Every CEO knows the math. Automate too fast, displace too many workers, and eventually the people who buy your products can’t afford them anymore. The demand cliff is visible to everyone. So why are companies racing toward it anyway?

That’s the question at the heart of a striking new paper, “The AI Layoff Trap,” by economists Brett Hemenway Falk (UPenn) and Gerry Tsoukalas (Boston University). Their answer is rigorous, uncomfortable, and has direct implications for how we think about AI policy.

The Trap, Explained

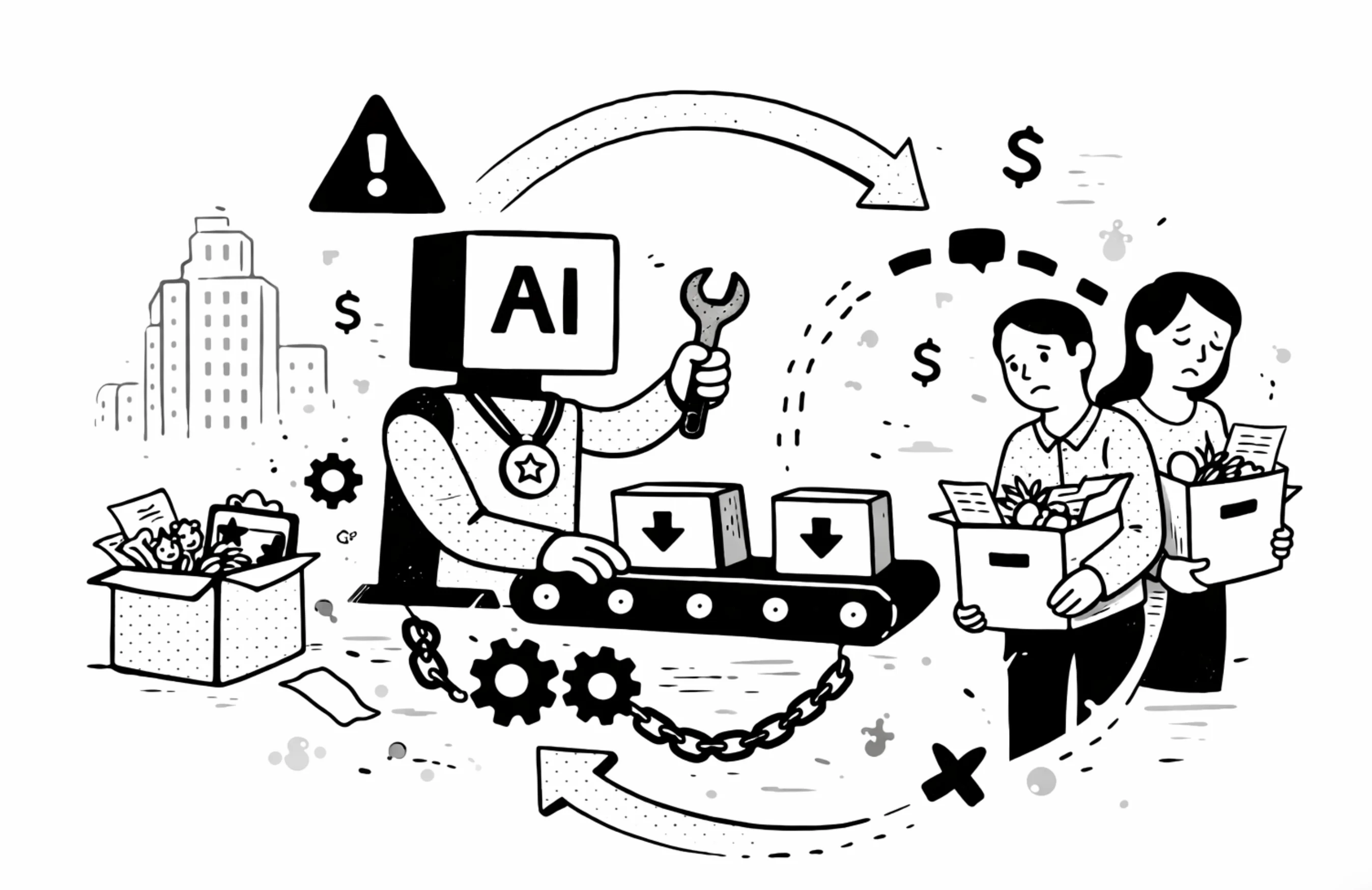

The core mechanism is a demand externality. When a firm automates a task and lays off a worker, it captures 100% of the cost savings. But the demand destruction — the spending that worker would have done in the economy — gets spread across all firms equally.

So each firm bears only 1/N of the damage it causes, where N is the number of competitors in its market. The other (N-1)/N falls on rivals.
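To see how quickly the internalized share shrinks, here is a minimal sketch of the 1/N split. The function name `damage_shares` is purely illustrative and not from the paper; the only input is the number of competitors N.

```python
from fractions import Fraction


def damage_shares(n_firms: int) -> tuple[Fraction, Fraction]:
    """Return (internalized, externalized) shares of the demand loss
    a firm causes when it automates, given n_firms equal competitors."""
    if n_firms < 1:
        raise ValueError("need at least one firm")
    internalized = Fraction(1, n_firms)   # the firm's own 1/N share
    externalized = 1 - internalized       # the (N-1)/N that falls on rivals
    return internalized, externalized


for n in (1, 2, 10, 100):
    internal, external = damage_shares(n)
    print(f"N={n:>3}: internalized={float(internal):.2f}, "
          f"externalized={float(external):.2f}")
```

With a single firm the externalized share is zero; with 100 competitors, a firm feels only one percent of the demand it destroys.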

This creates a classic collective action problem, but with a twist: it’s not a coordination failure. It’s a dominant strategy. Even if every CEO in a room agrees that collective restraint would be better for everyone — even if they all know the cliff is coming — each one still has an individual incentive to automate more than is socially optimal. Knowing the trap exists is not enough to escape it.

The math is unforgiving. In the frictionless limit, it collapses into a pure Prisoner’s Dilemma: every firm displaces its entire workforce, profits fall for everyone, and nobody wins. Both workers and firm owners end up worse off than if they’d cooperated.
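A toy two-firm version makes the dilemma concrete. The numbers below are hypothetical and chosen only for illustration, not taken from the paper: automating saves a firm 3, each layoff destroys 4 of total demand, and that loss is split equally between the two firms.

```python
from itertools import product

SAVING = 3.0       # hypothetical private cost saving from automating
DEMAND_LOSS = 4.0  # hypothetical total demand destroyed per layoff
N_FIRMS = 2        # the loss is shared equally across firms


def profit_change(own: int, rival: int) -> float:
    """Change in a firm's profit relative to nobody automating.
    own and rival are 1 (automate) or 0 (restrain)."""
    displaced = own + rival
    return own * SAVING - DEMAND_LOSS * displaced / N_FIRMS


for own, rival in product((0, 1), repeat=2):
    print(f"own={own} rival={rival} -> {profit_change(own, rival):+.1f}")

# Automating beats restraint whatever the rival does ...
automate_is_dominant = all(
    profit_change(1, r) > profit_change(0, r) for r in (0, 1)
)
# ... yet both automating leaves each firm worse off than both restraining.
both_worse_off = profit_change(1, 1) < profit_change(0, 0)
print(automate_is_dominant, both_worse_off)
```

The dilemma appears whenever the private saving lies between the firm's own share of the demand loss (here 2) and the full loss (here 4).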

The Red Queen Effect: Better AI Makes It Worse

Here’s the part that should alarm anyone betting that technological progress will self-correct the problem.

The paper shows that higher AI productivity (AI that doesn’t just cut costs but also boosts output per task) widens the over-automation wedge, the gap between the automation rate firms choose and the socially optimal one, rather than narrowing it.

Why? Because when AI makes a firm more productive, it also creates a market-share incentive: automate more than your rivals and you capture a larger slice of total spending. At the symmetric equilibrium, every firm does this simultaneously, so the market-share gains cancel out. But the demand destruction doesn’t. Each round of “better AI” raises the private incentive to automate without changing the socially optimal rate — the gap just keeps growing.

This is what the paper calls the Red Queen Effect: firms run faster and faster just to stay in the same place, while collectively destroying more and more demand.
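The cancellation at the symmetric equilibrium can be sketched with a stylized market-share rule. The functional form here is an assumption made for illustration, not the paper's model: each firm's output is `1 + productivity_gain * automation_rate`, and its share of spending is its output divided by total output.

```python
def market_share(own_rate: float, rival_rate: float,
                 n_firms: int, productivity_gain: float) -> float:
    """Share of total spending captured by one firm when its n_firms - 1
    rivals all choose rival_rate (hypothetical functional form)."""
    own_output = 1 + productivity_gain * own_rate
    rival_output = 1 + productivity_gain * rival_rate
    return own_output / (own_output + (n_firms - 1) * rival_output)


N = 4
for gain in (0.5, 2.0):
    symmetric = market_share(0.6, 0.6, N, gain)   # everyone automates alike
    deviating = market_share(1.0, 0.5, N, gain)   # one firm out-automates rivals
    print(f"gain={gain}: symmetric share={symmetric:.3f}, "
          f"deviating share={deviating:.3f}")
```

Under this rule the symmetric share is always 1/N regardless of how good the AI is, while the reward for out-automating rivals grows with the productivity gain. That is the treadmill: a stronger pull to automate, with no share gained once everyone follows.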

What Doesn’t Work

The paper evaluates six commonly proposed solutions. The results are sobering:

Universal Basic Income — Raises the floor on living standards, but adds a constant to demand without touching the marginal incentive to automate. Firms automate at exactly the same rate. UBI is a palliative, not a cure. Worse: by raising profits, it attracts more entrants, fragments the market further, and can actually widen the gap.

Capital income taxes — A profit tax scales the entire profit function by (1 − t), which cancels out of the first-order condition. It literally doesn’t affect automation decisions.

Worker equity / profit-sharing — This one actually helps, by recycling some demand back through worker spending. But workers spend only a fraction λ < 1 of what they receive, so closing the gap would require handing them more than 100% of profits. Even mandated full profit-sharing leaves a residual wedge.

Upskilling / retraining — Effective at narrowing the externality if it genuinely reabsorbs displaced workers at comparable wages. But historically, displaced workers suffer large, persistent earnings losses. There’s no evidence yet that AI-driven displacement will be different.

Wage adjustment — The conventional wisdom is that wages will fall as displaced workers flood the labor market, narrowing the cost advantage of automation and slowing the race. The paper confirms this partially: falling wages do raise the threshold at which the externality activates. But once it does, wage flexibility changes when the problem bites, not whether it exists. And “self-correction” via wage depression just replaces displacement with poverty — hardly a win.

Coasian bargaining — Can’t firms just agree among themselves to restrain? No. Because automation is a dominant strategy, any voluntary agreement is immediately undercut by individual incentives to defect. Communication is cheap talk. Even a coalition of M < N firms helps only in proportion to its combined market share, and the grand coalition needed to fully solve the problem will never form voluntarily.
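The UBI and profit-tax verdicts above come down to the same piece of calculus: adding a constant to profit, or multiplying it by a positive factor, leaves the profit-maximizing automation rate where it was. A minimal numerical sketch, using a made-up concave profit function and hypothetical policy parameters (none of these numbers come from the paper):

```python
from typing import Callable


def best_rate(profit: Callable[[float], float], grid_size: int = 100) -> float:
    """Automation rate in [0, 1] that maximizes profit, by grid search."""
    rates = [i / grid_size for i in range(grid_size + 1)]
    return max(rates, key=profit)


def base_profit(a: float) -> float:
    """Hypothetical concave profit as a function of automation rate a."""
    return 3.0 * a - 2.0 * a * a


UBI_TRANSFER = 5.0   # hypothetical constant boost to demand
PROFIT_TAX = 0.3     # hypothetical profit tax rate t
UNIT_TAX = 1.0       # hypothetical charge per unit of automation

baseline = best_rate(base_profit)
with_ubi = best_rate(lambda a: base_profit(a) + UBI_TRANSFER)
with_profit_tax = best_rate(lambda a: (1 - PROFIT_TAX) * base_profit(a))
with_unit_tax = best_rate(lambda a: base_profit(a) - UNIT_TAX * a)

print(baseline, with_ubi, with_profit_tax, with_unit_tax)
```

In this sketch the first three rates coincide, while the per-unit charge is the only instrument that moves the chosen rate, because it is the only one that changes the marginal payoff to automating.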

What Actually Works

One thing: a Pigouvian automation tax.

The logic is direct. Each firm already internalizes 1/N of the demand loss from its own automation. The tax charges it for the remaining (1 − 1/N) it’s currently externalizing onto rivals. For large markets, the optimal rate converges to approximately λ(1−η)w — computable from sector-level observables.
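As a rough sketch of how such a rate might be computed, the function below reads λ as the consumer spending propensity, η as the income replacement rate for displaced workers, and w as the wage. Those readings of η and w are assumptions based on context, and scaling the large-market limit by (1 − 1/N) for a finite market is also an assumption rather than the paper's exact formula. All input values are hypothetical.

```python
def automation_tax(n_firms: int, spend_propensity: float,
                   replacement_rate: float, wage: float) -> float:
    """Per-worker automation tax under the assumed reading:
    charge the (1 - 1/N) share of the demand loss a firm externalizes."""
    if n_firms < 1:
        raise ValueError("need at least one firm")
    # Assumed total demand loss per displaced worker: lambda * (1 - eta) * w
    full_loss = spend_propensity * (1 - replacement_rate) * wage
    return (1 - 1 / n_firms) * full_loss


# Hypothetical sector: lambda = 0.8, eta = 0.5, w = 50,000
for n in (1, 2, 10, 1000):
    print(n, round(automation_tax(n, 0.8, 0.5, 50_000), 2))

# A higher replacement rate (better reabsorption) lowers the required tax.
print(round(automation_tax(1000, 0.8, 0.7, 50_000), 2))
```

Under these assumptions a monopolist owes nothing, and the rate approaches λ(1−η)w as the number of firms grows.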

This isn’t a radical idea. It’s the same logic we use for carbon taxes. When a firm’s private action imposes costs on others that the market doesn’t price, you price them. The automation tax implements the cooperative optimum as a Nash equilibrium — each firm, facing the corrected cost signal, chooses the socially optimal automation rate without needing to coordinate.

The tax revenue, recycled into retraining programs, becomes self-limiting over time: better reabsorption raises the income replacement rate, which shrinks the externality, which reduces the required tax rate.

Who’s Most at Risk

The paper delivers a counterintuitive empirical prediction: the problem is worst not at dominant tech firms, but in fragmented, competitive industries deploying powerful AI.

A monopolist fully internalizes the externality — it bears the full demand loss from its own automation decisions. As markets fragment (more competitors, each with a smaller share), the wedge grows. The most competitive markets with the best AI tools create maximum displacement, not optimal outcomes.

The telltale empirical signature: profit erosion coinciding with mass layoffs. Standard economics predicts that cost-cutting technology raises profits. If firms are automating aggressively and profits are still falling, that’s the demand externality at work. The paper points to customer support, software services, and financial back-office operations as the most transparent testing grounds.

The Policy Implication

Most AI policy debate focuses on the aftermath: retraining funds, income support, social safety nets. This paper reframes the question entirely.

The issue isn’t just that displacement is painful. It’s that competitive incentives drive firms to displace more workers than is collectively rational — and no amount of compassion, coordination, or conventional policy can fix that without addressing the incentive structure directly.

Even a policymaker who cares only about aggregate profits, and not at all about worker welfare, would still want to reduce automation below the market equilibrium. The Nash outcome is Pareto-dominated. Everyone loses.

The conclusion is stark: policy must address not just what happens to workers after automation, but the competitive dynamics that produce excess automation in the first place. The market failure has a name, a mechanism, and a known remedy. The only open question is whether policymakers will act before the trap fully springs.

“The AI Layoff Trap” — Brett Hemenway Falk & Gerry Tsoukalas, arXiv:2603.20617 (March 2026)